
2/29/2024

Splunk HEC URL

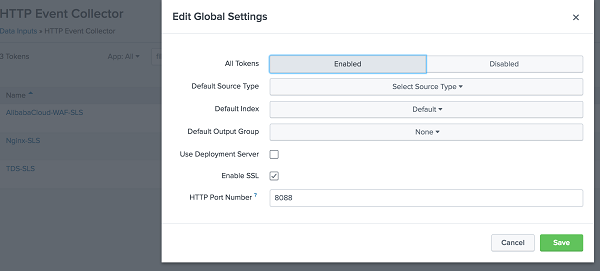

By Ameya Paldhikar, Partner Solutions Architect – AWS

By Marc Luescher, Sr. Solutions Architect – AWS

Amazon Web Services (AWS) customers of all sizes, from growing startups to large enterprises, manage multiple AWS accounts. Following the prescriptive guidance from AWS for multi-account management, customers typically centralize AWS log sources (AWS CloudTrail logs, VPC flow logs, AWS Config logs) from their multiple AWS accounts in Amazon Simple Storage Service (Amazon S3) buckets within a dedicated log archive account. Depending on the number of AWS accounts and the size of the workload, the volume of logs stored in these centralized S3 buckets can be extremely high (multiple TBs/day).

To ingest the logs from S3 buckets into Splunk, customers normally use the Splunk Add-on for AWS. The add-on is deployed on Splunk heavy forwarders, which act as dedicated pollers that pull the data from S3. These servers must also scale horizontally as ingestion volume grows in order to support near real-time ingestion of logs. This approach adds the overhead of managing the deployment, plus the cost of running this dedicated infrastructure.

Consider another use case where you want to optimize Splunk ingest license costs by filtering and forwarding only a subset of logs from the S3 buckets to Splunk. An example is ingesting only the rejected traffic within the VPC flow logs, where the field "action" = "REJECT". The pull-based ingestion approach currently does not offer a way to achieve that.

This post showcases a way to filter and stream logs from centralized Amazon S3 logging buckets to Splunk using a push mechanism built on AWS Lambda. The push mechanism offers benefits such as lower operational overhead, lower costs, and automated scaling. We'll provide instructions and sample Lambda code that filters virtual private cloud (VPC) flow logs with the "action" flag set to "REJECT" and pushes them to Splunk via a Splunk HTTP Event Collector (HEC) endpoint.

Splunk is an AWS Specialization Partner and AWS Marketplace Seller with Competencies in Cloud Operations, Data and Analytics, DevOps, and more. Leading organizations use Splunk's unified security and observability platform to keep their digital systems secure and reliable.

The architecture diagram in Figure 1 illustrates the process for ingesting the VPC flow logs into Splunk using AWS Lambda.

Figure 1 – Architecture for Splunk ingestion using AWS Lambda.

1. VPC flow logs for one or multiple AWS accounts are centralized in a logging S3 bucket within the log archive AWS account.
2. The S3 bucket sends an "object create" event notification to an Amazon Simple Queue Service (SQS) queue for every object stored in the bucket.
3. A Lambda function is created with Amazon SQS as its event source.
4. The function polls the messages from SQS in batches, reads the contents of each event notification, and identifies the object key and corresponding S3 bucket name.
5. The function then makes a "GetObject" call to the S3 bucket and retrieves the object.
6. The function filters out events whose "action" flag is not "REJECT".
7. The function streams the filtered VPC flow logs to the Splunk HTTP Event Collector.
8. The VPC flow logs are ingested and available for searching within Splunk.
9. If Splunk is unavailable, or if any error occurs while forwarding logs, the Lambda function forwards those events to a backsplash S3 bucket.

At a minimum, the following prerequisites apply:

- Publish VPC flow logs to Amazon S3: configure VPC flow logs to be published to an S3 bucket within your AWS account.
- Create an index in Splunk to ingest the VPC flow logs.

Step 1: Splunk HTTP Event Collector (HEC) Configuration

To get started, we need to set up Splunk HEC to receive the data before we can configure the AWS services to forward the data to Splunk.

1. Access Splunk Web, go to Settings, and choose Data inputs.
2. Select HTTP Event Collector and choose New Token.
3. Configure the new token as shown in Figure 3 below and click Submit. Verify that the Source Type is set to aws:cloudwatchlogs:vpcflow.

Figure 3 – Splunk HEC token configuration.

Once the token has been created, choose Global Settings, verify that All Tokens is enabled, and click Save.

Next, we need to add configurations to props.conf on the Splunk server so that line breaking, timestamp extraction, and field extractions are configured correctly. Copy the contents below into props.conf in $SPLUNK_HOME/etc/system/local/:

    # makes sure account_id is not used for timestamp
    MAX_TIMESTAMP_LOOKAHEAD = 10

For more information regarding these configurations, refer to the Splunk props.conf documentation.
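The Lambda workflow described in the architecture overview (read S3 notifications from an SQS batch, fetch each object, keep only records whose action is REJECT, push them to HEC, and fall back to the backsplash bucket on failure) can be sketched in Python as follows. This is a hypothetical illustration, not the post's sample code. It assumes plain-text, gzip-compressed flow log files, the boto3 library provided by the Lambda Python runtime, the HEC event endpoint (`/services/collector/event`), and hypothetical environment variables `SPLUNK_HEC_URL`, `SPLUNK_HEC_TOKEN` and `BACKSPLASH_BUCKET`; the index name `vpcflow` is likewise a placeholder for the index created in the prerequisites.

```python
"""Hypothetical Lambda sketch: SQS -> S3 GetObject -> keep REJECT records -> Splunk HEC."""
import gzip
import json
import os
import urllib.parse
import urllib.request

# Field order of the default VPC flow log format, used only if a file has no header line.
DEFAULT_FIELDS = ("version account-id interface-id srcaddr dstaddr srcport dstport "
                  "protocol packets bytes start end action log-status").split()


def objects_from_sqs_event(event):
    """Yield (bucket, key) for each S3 'object create' notification in an SQS batch."""
    for message in event.get("Records", []):
        notification = json.loads(message["body"])
        # S3 test events carry no "Records" key and are skipped.
        for record in notification.get("Records", []):
            bucket = record["s3"]["bucket"]["name"]
            # Object keys are URL-encoded in S3 event notifications.
            key = urllib.parse.unquote_plus(record["s3"]["object"]["key"])
            yield bucket, key


def filter_rejected(text):
    """Return (raw_line, fields) pairs for flow log records whose action is REJECT."""
    lines = [line for line in text.splitlines() if line.strip()]
    names = DEFAULT_FIELDS
    if lines and "action" in lines[0].split():
        # Flow log files in S3 typically begin with a header line naming each field.
        names, lines = lines[0].split(), lines[1:]
    rejected = []
    for line in lines:
        fields = dict(zip(names, line.split()))
        if fields.get("action") == "REJECT":
            rejected.append((line, fields))
    return rejected


def hec_payload(records, sourcetype="aws:cloudwatchlogs:vpcflow", index="vpcflow"):
    """Build a batched HEC /event body: one JSON event object per line.

    The index name "vpcflow" is a hypothetical placeholder.
    """
    events = []
    for raw, fields in records:
        event = {"event": raw, "sourcetype": sourcetype, "index": index}
        # Use the record's "start" (Unix seconds) as the event time when present.
        if fields.get("start", "").isdigit():
            event["time"] = int(fields["start"])
        events.append(json.dumps(event))
    return "\n".join(events)


def send_to_hec(url, token, body, timeout=10):
    """POST the batch to HEC; raises on HTTP errors, connection failures, or timeouts."""
    request = urllib.request.Request(
        url,
        data=body.encode("utf-8"),
        headers={"Authorization": f"Splunk {token}",
                 "Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


def handler(event, context):
    """Lambda entry point for an SQS event source (environment variable names are hypothetical)."""
    import boto3  # bundled with the AWS Lambda Python runtime

    s3 = boto3.client("s3")
    hec_url = os.environ["SPLUNK_HEC_URL"]  # e.g. https://<splunk-host>:8088/services/collector/event
    hec_token = os.environ["SPLUNK_HEC_TOKEN"]
    backsplash_bucket = os.environ["BACKSPLASH_BUCKET"]

    for bucket, key in objects_from_sqs_event(event):
        data = s3.get_object(Bucket=bucket, Key=key)["Body"].read()
        if key.endswith(".gz"):
            data = gzip.decompress(data)  # plain-text flow log files in S3 are gzip-compressed
        body = hec_payload(filter_rejected(data.decode("utf-8")))
        if not body:
            continue  # no REJECT records in this object
        try:
            send_to_hec(hec_url, hec_token, body)
        except OSError:
            # URLError, HTTPError and socket timeouts all subclass OSError: Splunk was
            # unreachable or refused the batch, so park it in the backsplash bucket.
            s3.put_object(Bucket=backsplash_bucket, Key=key, Body=body.encode("utf-8"))
```

HEC accepts several JSON event objects in a single request body, so the sketch sends one batch per S3 object rather than one request per record. Setting `time` explicitly ties each event's timestamp to the flow record's start time instead of to when the batch reached Splunk.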
